Gathering Text Entry Metrics on Android Devices

Contents:

Overview

Summary: Researchers required software to record performance metrics for text entry usability studies. I designed and developed a mobile app that facilitated ethnographic studies, and was agnostic to the input method being evaluated.

Users and Stakeholders: Initially, I thought only my research group and I would use my app. (It later grew a global user base of academic and industry researchers.) Additionally, I needed buy-in from my supervisor for my research projects.

Pain Points: Existing solutions were desktop-based, or required a dedicated app for each novel keyboard and text entry technique.

Scope and Constraints: At the time of design, Android was the only mainstream mobile platform that allowed installation of third-party keyboards, so an iOS version was not practical. This was my first mobile app, and I needed to learn Android development.

Methods Used: Usability-lab studies (i.e., user testing), usability benchmarking, competitive analysis, quantitative surveys, metrics analysis, log analysis.

Process

Discover Requirements

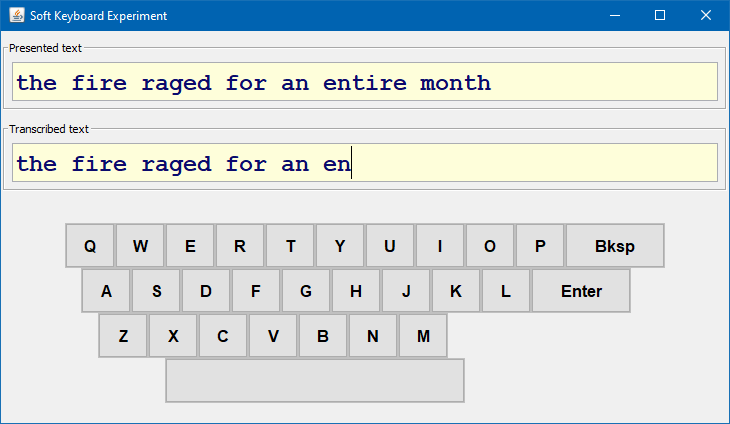

Tools existed to conduct text entry user studies on PCs. They had simple UIs, and presented phrases for the user to type. Regardless of the tool, the presented phrases typically came from a set published by my supervisor, Scott, which had become the standard within the research community. Scott modelled the phrase set after a repository of English prose, derived from published books and articles. [Scott's Experiment Software]

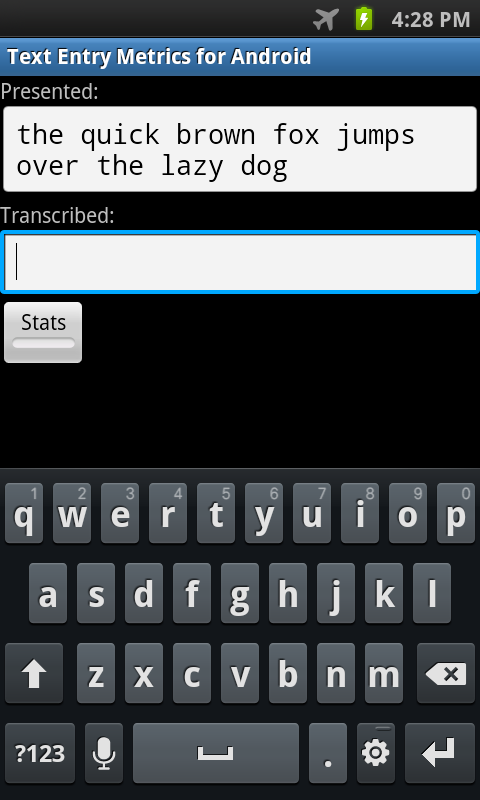

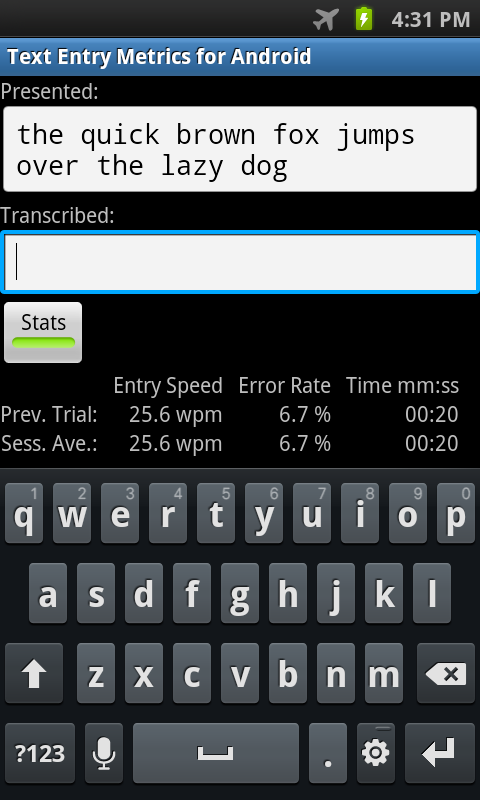

For my smartphone app, I wanted to retain a simple user interface and Scott's phrase set. However, text entry on mobile devices is often less formal. It contains smilies, slang, shorthand, handles, and hashtags. I needed to curate or create a phrase set reflective of these tasks. Once the user enters the presented phrase, the app needs to record events and performance metrics (e.g., speed in words per minute, accuracy as an error rate) to logs for subsequent analysis.

- One or more phrase sets that represent mobile text entry tasks (for use in user studies)

- Mobile app that facilitates user studies by presenting phrases and recording text entry metrics

- Log files that record low-level, time-stamped events, and higher-level, calculated metrics for speed and error rate
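To make the two calculated metrics concrete, here is a minimal Java sketch. It assumes conventions that are common in text entry research but that this case study does not spell out: one "word" is five characters, timing runs from the first to the last entered character, and error rate is the edit distance between the presented and transcribed phrases divided by the longer phrase's length. The class and method names are hypothetical and are not taken from TEMA's source.

```java
/** Hypothetical helper; the names and formulas are illustrative assumptions, not TEMA's code. */
final class TextEntryMetrics {

    /** Entry speed in words per minute, treating five characters as one word (assumed convention). */
    static double wordsPerMinute(String transcribed, long elapsedMillis) {
        if (transcribed.length() < 2 || elapsedMillis <= 0) {
            return 0.0;
        }
        double seconds = elapsedMillis / 1000.0;
        // Timing starts when the first character appears, so only length - 1 characters are timed.
        return ((transcribed.length() - 1) / seconds) * 60.0 / 5.0;
    }

    /** Levenshtein distance: the minimum number of insertions, deletions, and substitutions. */
    static int editDistance(String a, String b) {
        int[] prev = new int[b.length() + 1];
        int[] curr = new int[b.length() + 1];
        for (int j = 0; j <= b.length(); j++) {
            prev[j] = j;
        }
        for (int i = 1; i <= a.length(); i++) {
            curr[0] = i;
            for (int j = 1; j <= b.length(); j++) {
                int cost = (a.charAt(i - 1) == b.charAt(j - 1)) ? 0 : 1;
                curr[j] = Math.min(Math.min(curr[j - 1] + 1, prev[j] + 1), prev[j - 1] + cost);
            }
            // Reuse the two rows instead of allocating a full matrix.
            int[] tmp = prev;
            prev = curr;
            curr = tmp;
        }
        return prev[b.length()];
    }

    /** Error rate as a percentage of the longer of the two phrases (assumed definition). */
    static double errorRatePercent(String presented, String transcribed) {
        int longest = Math.max(presented.length(), transcribed.length());
        if (longest == 0) {
            return 0.0;
        }
        return 100.0 * editDistance(presented, transcribed) / longest;
    }
}
```

Under these assumptions, typing the 11-character phrase "hello world" in 6 seconds works out to about 20 words per minute, and a transcription that differs from a 19-character phrase by one missing letter has an error rate of roughly 5.3%.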

Explore and Prototype

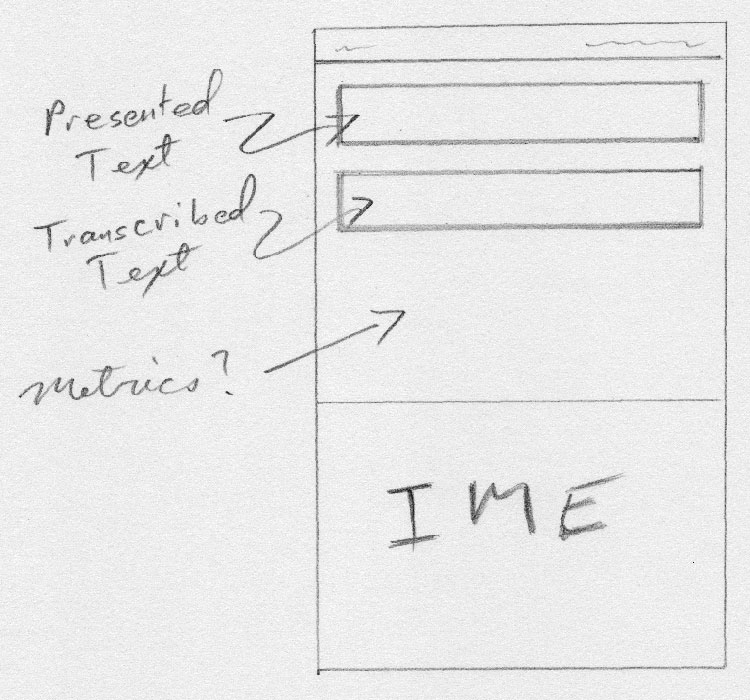

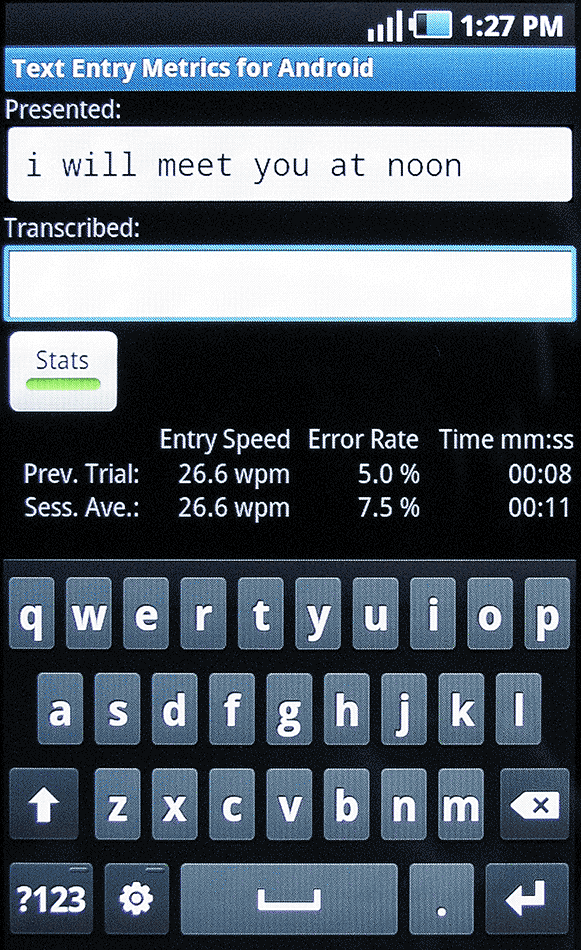

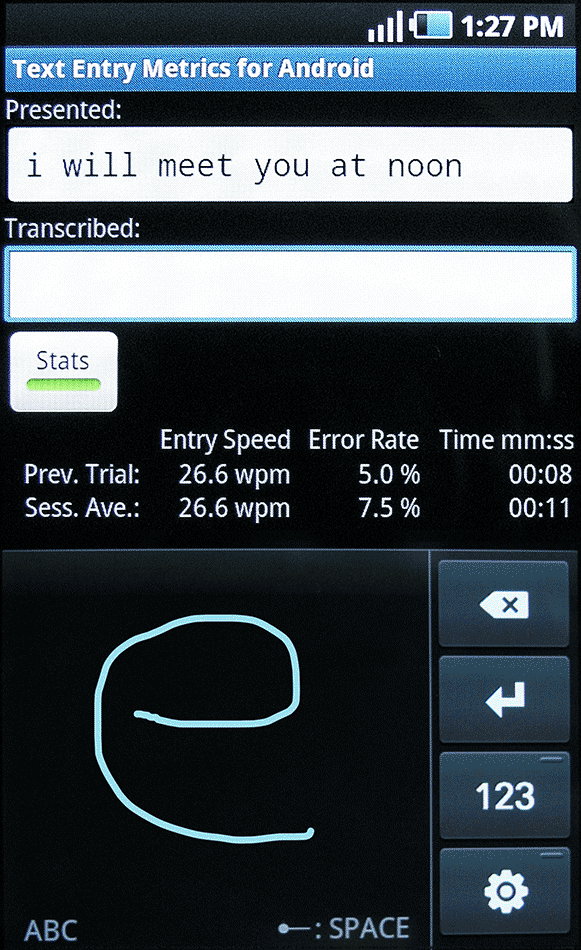

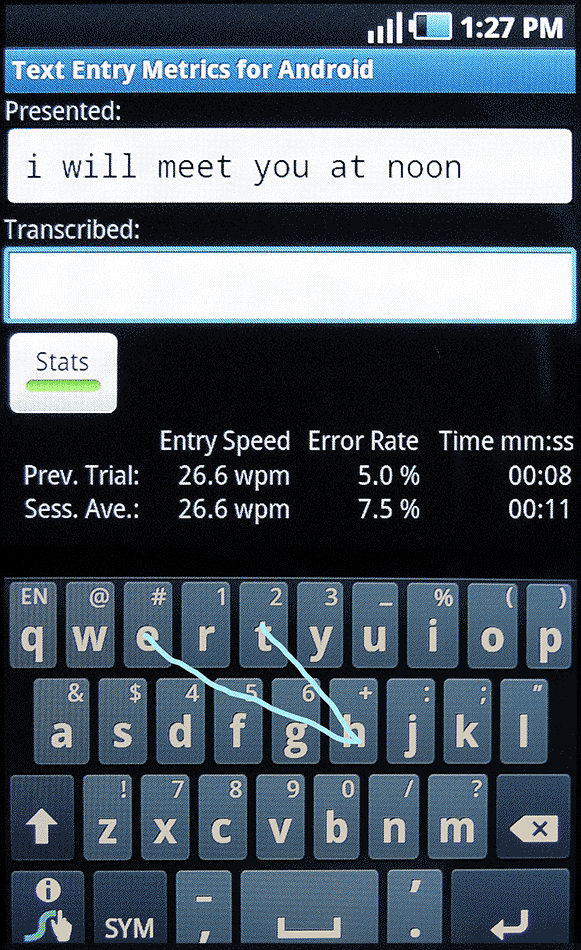

Of the two major smartphone platforms, only Android allowed developers to create their own keyboards, generically called "Input Method Editors" (IMEs). This was my first mobile app, called "TEMA" (Text Entry Methods on Android). Thankfully, I already knew Java, so learning Android development was straightforward, but still time-consuming. Based on the simple UI of existing desktop tools, I sketched a wireframe with pencil and paper.

Existing apps for evaluating novel keyboards integrated the keyboard interface into the evaluation app. This meant rewriting the evaluation app for each new keyboard, and not being able to evaluate existing third-party keyboards.

I wanted TEMA to be flexible and agnostic to the IME, but this meant having no direct communication with the IME code. Consequently, TEMA could not detect non-text events internal to the IME (e.g., activating or deactivating the Shift-key but not typing anything). Android enforced this for security reasons (e.g., to prevent stealing of passwords entered with the IME). Instead, I designed TEMA so that user study participants typed in a text area within the app, and TEMA monitored and recorded changes to the text field to calculate key performance metrics.
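The idea of inferring events purely from changes to the text field can be sketched in plain Java. This is an illustration under stated assumptions, not TEMA's implementation: the `TextChangeLog` class, its `Event` type, and the tab-separated log format are all hypothetical. On Android, a method like `onTextChanged` below would plausibly be called from a `TextWatcher` attached to the text field, which is one way (assumed here) to observe edits without any knowledge of the IME.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical IME-agnostic logger: it only ever sees successive contents of the text field. */
final class TextChangeLog {

    /** One time-stamped change: what disappeared from the field and what appeared. */
    static final class Event {
        final long timestampMillis;
        final String removed;
        final String inserted;

        Event(long timestampMillis, String removed, String inserted) {
            this.timestampMillis = timestampMillis;
            this.removed = removed;
            this.inserted = inserted;
        }

        @Override
        public String toString() {
            // Illustrative log line format (an assumption, not TEMA's actual format).
            return timestampMillis + "\t-" + removed + "\t+" + inserted;
        }
    }

    private final List<Event> events = new ArrayList<>();
    private String previous = "";

    /** Compares the new field contents with the previous contents and logs the difference. */
    void onTextChanged(String current, long timestampMillis) {
        int maxPrefix = Math.min(previous.length(), current.length());
        int prefix = 0;
        while (prefix < maxPrefix && previous.charAt(prefix) == current.charAt(prefix)) {
            prefix++;
        }
        // The shared suffix must not overlap the shared prefix.
        int maxSuffix = maxPrefix - prefix;
        int suffix = 0;
        while (suffix < maxSuffix
                && previous.charAt(previous.length() - 1 - suffix)
                   == current.charAt(current.length() - 1 - suffix)) {
            suffix++;
        }
        String removed = previous.substring(prefix, previous.length() - suffix);
        String inserted = current.substring(prefix, current.length() - suffix);
        if (!removed.isEmpty() || !inserted.isEmpty()) {
            events.add(new Event(timestampMillis, removed, inserted));
        }
        previous = current;
    }

    List<Event> events() {
        return events;
    }

    /** Time between the first and last logged change, usable as the entry time for speed. */
    long elapsedMillis() {
        if (events.isEmpty()) {
            return 0L;
        }
        return events.get(events.size() - 1).timestampMillis - events.get(0).timestampMillis;
    }
}
```

Because the logger diffs whole field contents, it handles a single keystroke, a backspace, and a multi-character autocorrection (for example, "teh " becoming "the ") in the same way. It also shows the limitation described above: a Shift press that changes no text produces no event.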

For phrases characteristic of mobile text entry, I found data sets of actual emails, text messages, and tweets. For each collection, I calculated its character distribution (i.e., for each character, how often did it appear within the entire collection?) using a Java program I wrote. I anonymized the phrases by substituting names, email addresses, and phone numbers. I also filtered out phrases with profanities or more than 35 characters (to match Scott's maximum phrase length). Using another Java program I wrote, I selected approximately 1000 phrases with maximum correlation with the original collection's character distribution, and arrived at three phrase sets characterizing email, texting, and tweeting.
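The character-distribution comparison can be illustrated with a short Java sketch. The text above does not say which correlation measure or selection procedure the original programs used, so this example assumes Pearson correlation over relative character frequencies and shows only the scoring step. The class and method names are hypothetical.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Hypothetical sketch of scoring a phrase subset against its source collection. */
final class CharacterDistribution {

    /** For each character, the fraction of all characters in the phrases that it accounts for. */
    static Map<Character, Double> relativeFrequencies(List<String> phrases) {
        Map<Character, Double> counts = new HashMap<>();
        long total = 0;
        for (String phrase : phrases) {
            for (int i = 0; i < phrase.length(); i++) {
                counts.merge(phrase.charAt(i), 1.0, Double::sum);
                total++;
            }
        }
        if (total == 0) {
            return counts;
        }
        final double n = total;
        counts.replaceAll((character, count) -> count / n);
        return counts;
    }

    /** Pearson correlation (an assumed measure) over the union of both character sets. */
    static double correlation(Map<Character, Double> a, Map<Character, Double> b) {
        Set<Character> alphabet = new HashSet<>(a.keySet());
        alphabet.addAll(b.keySet());
        int n = alphabet.size();
        if (n == 0) {
            return 0.0;
        }
        double sumA = 0.0;
        double sumB = 0.0;
        for (Character c : alphabet) {
            sumA += a.getOrDefault(c, 0.0);
            sumB += b.getOrDefault(c, 0.0);
        }
        double meanA = sumA / n;
        double meanB = sumB / n;
        double covariance = 0.0;
        double varianceA = 0.0;
        double varianceB = 0.0;
        for (Character c : alphabet) {
            double da = a.getOrDefault(c, 0.0) - meanA;
            double db = b.getOrDefault(c, 0.0) - meanB;
            covariance += da * db;
            varianceA += da * da;
            varianceB += db * db;
        }
        if (varianceA == 0.0 || varianceB == 0.0) {
            return 0.0; // Correlation is undefined for a flat distribution; report 0 by convention.
        }
        return covariance / Math.sqrt(varianceA * varianceB);
    }
}
```

A selection program could then score candidate subsets with `correlation(relativeFrequencies(subset), relativeFrequencies(collection))` and keep the subset that scores highest. How candidates are generated is left open here, since the case study does not describe it.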

Evaluate and Iterate

I conducted a small user study with two goals: usability testing of TEMA, and benchmark testing of three existing third-party IMEs. Although the benchmarking results are not pertinent to this case study, the user study revealed some oversights in log formatting, and the need to log device parameters, such as IME name, Android version, and screen size. It also highlighted the need to implement "safeguards". Sometimes, study participants would accidentally press the menu button on the smartphone's UI. Other times, participants would haphazardly enter text to complete the study quickly and receive their payment for participation. Both situations negatively impacted study validity. Subsequent iterations of TEMA disabled the menu button, and only allowed progression within the study if the participant surpassed a user-definable threshold for typing accuracy.

One pain point that I heard from other users of TEMA was the absence of support for IME-specific metrics. I was able to find a way to allow the IME to pass low-level events to TEMA for logging. The IME could use Android's inter-process notification framework to issue notifications. TEMA would register as a listener for these notifications, and log the events passed to it from the IME. This required the user of TEMA to modify the source code of the IME. But since most TEMA users were evaluating keyboards they (or their research group) developed, this requirement was inconsequential. TEMA users evaluating third-party IMEs could still gather the typical key performance metrics without any modification to TEMA or the IME.

Outcomes, Insights, and Deliverables

TEMA has been very well received by researchers in both academia and industry. Although I no longer actively develop the app, I still get requests for it. I provide it freely to academic researchers at universities world-wide.

In summary, here are the project deliverables:

- An Android app to facilitate ethnographic studies on mobile text entry

- Phrase sets that characterize specific mobile text-based communication, to facilitate usability studies and benchmarking

And here are the insights and outcomes:

- A realization that promoting and sharing simple, versatile software tools can yield far-reaching research collaborations

- Four academic publications, three of them at the prestigious ACM SIGCHI conference [links to PDFs]

- A user base consisting of industry researchers at Google, Logitech, and Oculus, and over 65 academic researchers at the following institutions:

Aalto University, Finland

Brock University, Canada

Carnegie Mellon University, USA

Colorado Technical University, USA

Georgia Institute of Technology, USA

Indian Institute of Technology Bombay, India

INHA University, South Korea

KAIST, South Korea

Louisiana State University, USA

McMaster University, Canada

National Open University of Nigeria, Nigeria

Ryerson University, Canada

Seoultech University, South Korea

Shaanxi Normal University, China

Simon Fraser University, Canada

Stuttgart Media University, Germany

Tsinghua University, China

Universität Regensburg, Germany

University College London, UK

University of Bremen, Germany

University of Bristol, UK

University of Canterbury, New Zealand

University of Central Lancashire, UK

University of Dundee, UK

University of Glasgow, UK

University of Hamburg, Germany

University of Hildesheim, Germany

University of Lorraine, France

University of Osnabrück, Germany

University of Regensburg, Germany

University of Salerno, Italy

University of Strathclyde, UK

University of Stuttgart, Germany

University of Tampere, Finland

University of Toronto, Canada

University of Washington, USA

University of Waterloo, Canada

University Putra Malaysia, Malaysia

Utrecht University, Netherlands

Vienna University of Technology, Austria

Vrije Universiteit Amsterdam, Netherlands

Wichita State University, USA

York University, Canada

Zhejiang University, China